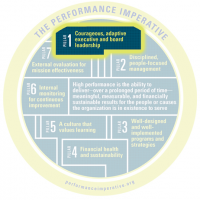

Principle 7.4: Leaders draw a clear distinction between outputs (e.g., meals delivered, youth tutored) and outcomes (meaningful changes in knowledge, skills, behavior, or status). Those who are working to improve outcomes commission evaluations to assess whether they are having a positive net impact. In other words, they want to know to what extent, and for whom, they’re making a meaningful difference beyond what would have happened anyway.

7.4.1: My organization’s internal performance data clearly distinguish between outputs and outcomes—and have been validated by independent experts.

7.4.2: My organization’s external evaluators use output data to help us learn about program quality and fidelity.

7.4.3: My organization’s external evaluators use outcome data to help us determine whether we’re making a difference beyond what would have happened anyway. This requires using a reliable research design to compare data from our participants with data from similar people who did not receive our services.